Who guards the guardians? NewsGuard's quest to clean up online news

The Media Leader Interview

NewsGuard CEOs Steven Brill and L Gordon Crovitz explain how programmatic advertising is funding Russian propaganda and healthcare hoax sites, and why social media platforms don’t want to use NewsGuard’s services to stop the spread.

Picture walking into a library today: books are arranged on shelves according to subject matter; magazines and newspapers are neatly ordered; you can pick up a book and see from the book jacket who the author and publisher are; best of all, a librarian is there to guide you through the browsing experience with additional information as needed.

Now picture walking into a different library: there are no shelves, there are no librarians, and instead a trillion pieces of paper are flying around. You pluck one out of the air. You don’t know who wrote it, who financed it, or what their credibility is.

According to Steven Brill and L Gordon Crovitz, founders and co-CEOs of NewsGuard, that is the internet. And for advertisers, that is programmatic advertising.

NewsGuard was created in 2018 as an online tool for rating the credibility of all the news and information sites responsible for at least 95% of engagement in a given country. The goal was to give consumers, advertisers, brands, search engines, cybersecurity firms, and government intelligence agencies a tool they lacked for identifying sites that spread blatant disinformation and misinformation on the web.

The founders are both accomplished journalists: Brill has launched a number of publications, including The American Lawyer and Journalism Online, and founded CourtTV (now TruTV) on top of being a former Newsweek and Reuters columnist; Crovitz is a former editorial writer for and later publisher of The Wall Street Journal, and VP planning and development at Dow Jones; he also led the creation of and chaired Factiva while at the company.

The duo has hired a large team of journalists to assist NewsGuard in its mission, which has now expanded beyond the US to include the UK, Canada, France, Germany, and Italy, with Austria, Australia, and New Zealand launching in the near future.

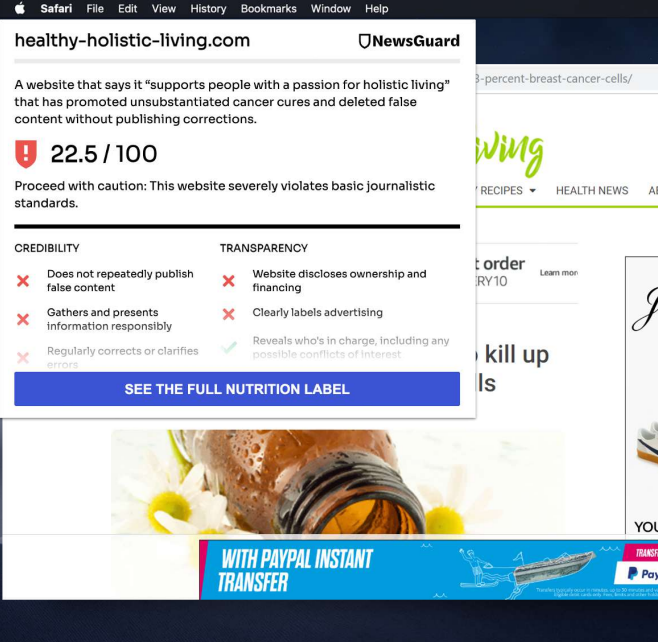

The company uses “human intelligence” (as opposed to artificial intelligence) – real employees – to rate each site against a set of criteria it says is proven to give a fair analysis of a site’s credibility. The criteria, each weighted differently toward an overall score out of 100, are as follows:

– Does not repeatedly publish false content (22 pts)

– Gathers and presents information responsibly (18 pts)

– Regularly corrects or clarifies errors (12.5 pts)

– Handles the difference between news and opinion responsibly (12.5 pts)

– Avoids deceptive headlines (10 pts)

– Discloses ownership and financing (7.5 pts)

– Clearly labels advertising (7.5 pts)

– Reveals who’s in charge, including possible conflicts of interest (5 pts)

– Provides names of content creators and their contact or biographical information (5 pts)

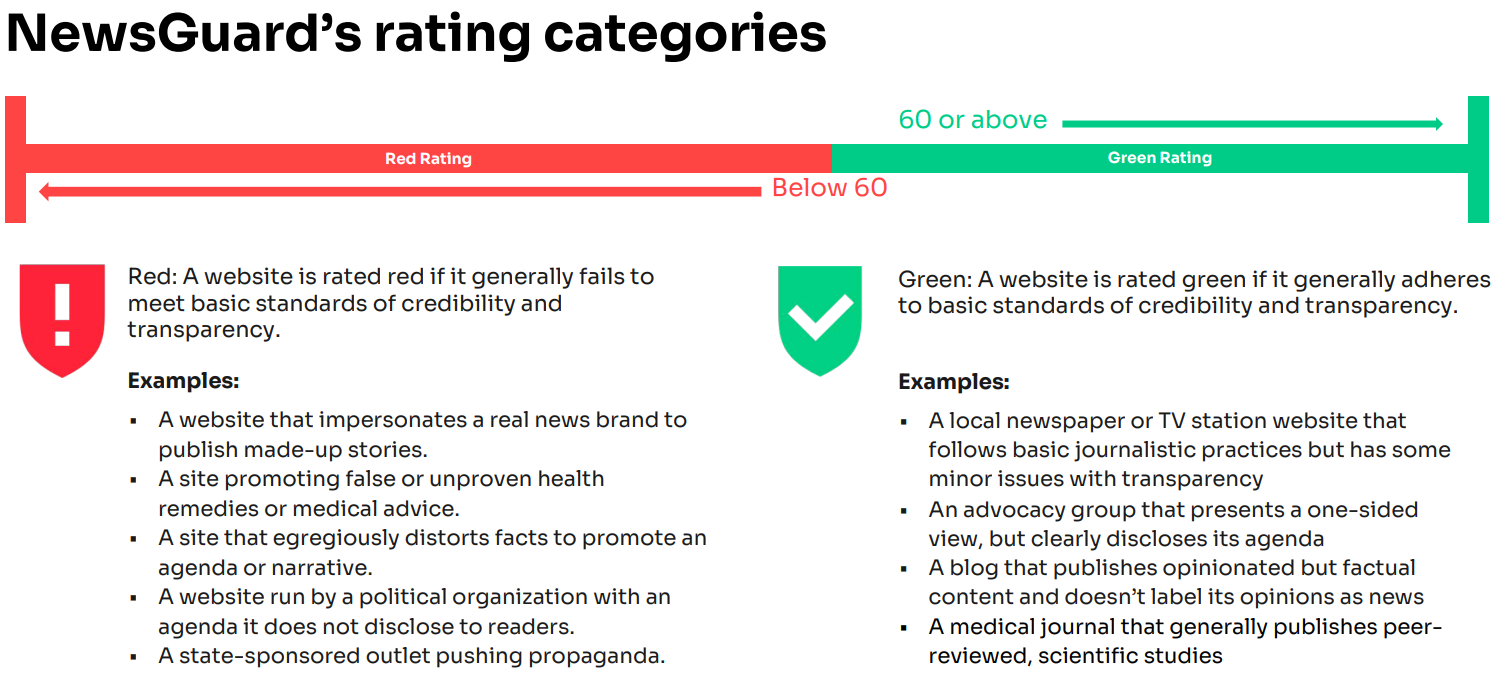

After an initial assessment, in which a NewsGuard analyst drafts a report the company dubs a “nutrition label” for the given site, the company attempts to open a dialogue with the site in question to give it the opportunity to respond and improve its rating. The report is then reviewed by a fact-checker and an editor before publication. Ratings, which are broadly categorized as “green” (above 60 pts) and “red” (below 60 pts), are then periodically reviewed.
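The arithmetic behind the weighted criteria and the green/red cut-off can be sketched in a few lines of Python. This is only an illustration, not NewsGuard's actual system: the criterion keys and function names are hypothetical, the sketch assumes each criterion is simply passed or failed in full, and it assumes a score of exactly 60 counts as green, which the description above does not specify.

```python
# Weights as listed in the article; together they add up to 100 points.
# The dictionary keys are hypothetical shorthand for the nine criteria.
CRITERIA_WEIGHTS = {
    "no_repeated_false_content": 22.0,
    "responsible_information_gathering": 18.0,
    "corrects_or_clarifies_errors": 12.5,
    "separates_news_and_opinion": 12.5,
    "avoids_deceptive_headlines": 10.0,
    "discloses_ownership_and_financing": 7.5,
    "clearly_labels_advertising": 7.5,
    "reveals_who_is_in_charge": 5.0,
    "names_content_creators": 5.0,
}

# Assumption: exactly 60 is treated as green; the article only says
# "above 60" and "below 60".
GREEN_THRESHOLD = 60.0


def score_site(criteria_met):
    """Sum the weights of every criterion the site passes.

    Assumes all-or-nothing credit per criterion; missing keys count as failed.
    """
    unknown = set(criteria_met) - set(CRITERIA_WEIGHTS)
    if unknown:
        raise ValueError(f"Unknown criteria: {sorted(unknown)}")
    return sum(
        weight
        for name, weight in CRITERIA_WEIGHTS.items()
        if criteria_met.get(name, False)
    )


def label(score):
    """Map a 0-100 score to the binary green/red category."""
    return "green" if score >= GREEN_THRESHOLD else "red"


# A hypothetical site that passes everything except two criteria.
example_site = {name: True for name in CRITERIA_WEIGHTS}
example_site["responsible_information_gathering"] = False
example_site["corrects_or_clarifies_errors"] = False

example_score = score_site(example_site)
print(example_score, label(example_score))
```

Under these assumptions, a site can fail two mid-weight criteria and still land in the green tier, which is why only the worst offenders end up red.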

Though NewsGuard initially considered a non-binary approach to their categories, they felt adding a third (“yellow”) tier would ultimately be more complicated, and they note that advertisers use the more detailed point scores and nutrition labels to customize their campaigns anyway.

Major ad holding companies Publicis, Omnicom, and IPG already partner with the company, and a host of other partners such as Pubmatic, TripleLift, Comscore, Zefr, and Giphy are also signed on.

In addition to their ratings, NewsGuard employs numerous journalists to track disinformation and misinformation campaigns around the globe, such as those out of Russia following its invasion of Ukraine, and those that have spread throughout the global Covid-19 pandemic.

From their growing dataset, NewsGuard provides distinct information to their different groups of clients, broadly categorized as consumers (through a browser extension), advertisers (through robust inclusion and exclusion lists and other data), and intelligence and defense agencies.

According to 2019 Gallup research, large majorities of NewsGuard users would be less likely to read or share news from unreliable sites. In other words, their tool appears to work.

‘AI cannot tell the difference between a Russian propaganda site and a reliable source of news’

Brill and Crovitz, speaking to The Media Leader over a Zoom call, described how programmatic advertising has unintentionally channeled, by their and Comscore’s estimate, $2.6bn a year to hoax sites.

“For brands, until now they didn’t really have an effective tool to keep their ads off of sites like Russian disinformation sites,” says Crovitz in his measured and calculated cadence.

“And I think many of them had assumed that their legacy brand safety provider was providing that service, but they can’t, because artificial intelligence […] cannot tell the difference between a Russian propaganda site and a reliable source of news.”

In a prescient January 2020 New York Times op-ed, Crovitz wrote that the single biggest advertiser for Sputnik News, a Kremlin-backed propagandist site, was Warren Buffett, through programmatic ads from Geico Insurance.

“But it’s not like Warren Buffett wakes up in the morning and says, ‘I wonder how I can send Putin some money today,’” jokes Brill, who wears an outfit out of 1980s Wall Street, suspenders and all. It was unclear if he was holding a remote or a cigar in his right hand during the early-morning interview with The Media Leader.

On 2 March, as part of the EU’s sanctions against Russia, the European Commission issued a prohibition against serving ads on Sputnik News as well as Russia Today. But NewsGuard has found 181 additional sites publishing Russian disinformation about Ukraine, many of which carry programmatic advertising, including ads from virtually every blue-chip company, according to Brill and Crovitz.

But political propaganda, while an increasingly large issue, does not make up the highest percentage of red sites – that honor goes to healthcare hoax websites, which have only become more salient during the pandemic.

Initially stunned by the high percentage of “reds” that were engaged in healthcare hoaxes, Brill and Crovitz came to understand that there are so many because, thanks to the heavy traffic on those sites, that’s where the money is.

And the problem is exacerbated because in the advertising world, those sites are typically categorized as health and beauty rather than news, keeping them away from greater scrutiny when advertisers create exclusion lists.

‘Facebook doesn’t want any part of that’

But hoax sites and state propaganda are not the only cause of so much strife online. The platforms that magnify their spread are also to blame.

“It seems that everybody has a crazy Uncle Billy,” says Brill.

“So even if your own news diet is pretty healthy, there’s going to be material in virtually everyone’s Facebook feed or Twitter feed from crazy sources that are being shared by people who don’t realize they’re spreading falsehoods.”

Perhaps the most influential segment of the internet – social media – has been reluctant to work with NewsGuard. Upon its founding, the company’s original prime target was Facebook.

Brill explained that when they were developing NewsGuard in 2018, they approached Chris Cox, Facebook’s chief product officer, to inquire about the potential for collaboration. The initial reaction was highly positive, as Brill describes.

“[They] were so enthusiastic about how we could solve a problem they hadn’t been able to solve – not by blocking anything, but by giving people information through these nutrition labels – that they actually in one case talked to one of our investors to encourage them to invest.”

But NewsGuard was suddenly turned away.

“Unfortunately, we did not talk to the number one and number two people at Facebook, who vetoed the whole idea.”

Facebook, now known as Meta, has come under intense scrutiny in the past year for allegedly allowing hate speech, misinformation, and other threats to the public to go unchecked on their platform.

A spokesman for Facebook highlighted that the company currently partners with more than 80 independent, certified fact-checking organizations, and actively reduces the distribution of content that contains false information or removes it (and the accounts that spread it) altogether in the case of “the most serious kinds of misinformation”, such as about Covid-19 or content that is meant to suppress voting.

According to the pair, NewsGuard has also been in discussions with Twitter and LinkedIn about integrating NewsGuard’s data into their platforms, though no commitments appear to have been made at present. (Microsoft, which owns LinkedIn, has an existing relationship with NewsGuard: its Edge browser comes with the extension pre-installed.)

NewsGuard receives enough interest from advertisers, agencies, and other tech companies to more than cover their costs, even without deals with major social media platforms, but Brill and Crovitz stressed that platforms are abdicating an ethical responsibility by not using NewsGuard or comparable data from other watchdogs.

“There’s no getting around the fact that Facebook should use us, but for the fact that what we now know from all the whistleblowers: that using us would destroy their business model,” says Brill.

Crovitz adds: “A very high percentage of Facebook users, if they had our browser extension, they’d see that a large part of their news feed is from unreliable sources.”

“If Facebook implemented us at the source, they’d see the same thing,” interjects Brill.

“Facebook doesn’t want any part of that.”

According to the Gallup study, there is demand for their product on platforms such as Facebook – eight in 10 participants say they would like to see social media and search companies incorporate NewsGuard, and nearly seven in 10 would trust these companies more if they include NewsGuard in their products.

But the pair say it is unlikely that any site or platform whose business model depends on attention and engagement will employ them unless governments step in to mandate greater responsibility.

As Crovitz says, “We don’t think government ought to be censoring anything – we don’t think government ought to be having anything to do with content at all – but we also don’t think that the platforms should be continued to be exempt from any legal responsibility for the harms they’re causing, especially when there are so many solutions in virtually every area of known harm.”

Amid growing concern for online safety, governments in the UK and EU have begun to pressure platforms to allow third-party “middleware” solutions that give consumers access to better information about the content they’re exposed to. NewsGuard is a member of OSTIA, a UK trade organization for online safety companies that specialize in these types of solutions.

In October 2020, NewsGuard put out a position paper arguing that Section 230 of the Communications Decency Act in the US, which created blanket immunity for online platforms from responsibility for content published on their sites, could easily be reformed.

Their proposal would require that providers of interactive computer services maintain “good faith attempts to fulfill its responsibility to block or screen potentially harmful content or provide information to users that such content may be harmful” in order to escape liability.

In Brill and Crovitz’s view, such a change would meet the original intent of the law.

“The digital platforms are the only industry in the world that are told that they’re not going to be held accountable for the easily foreseeable harms that they cause,” says Crovitz.

“Imagine if a chemical company or an oil shipping company was told, ‘don’t worry about any liability that you cause, just carry on.’ […] It’s no surprise that an industry that was told it was not going to be accountable for its actions turns out to be irresponsible.”

‘We don’t ask permission to rate somebody’

Brill and Crovitz (pictured, below) were also quick to point out additional benefits to advertisers, beyond ethical responsibilities, of using NewsGuard’s inclusion and exclusion lists. For instance, advertisers might be made aware of reliable sites serving underrepresented communities that they had not previously heard of.

Meanwhile, sites that advertisers previously thought were reliable may fall short on particular criteria, making them sites the advertisers would rather not support with ad revenue.

“When we engage with a client on an inclusion list, typically the client has a list of 400 or 500 news and information sites that they say are legit,” says Crovitz.

“We will audit that, and it’ll turn out that a couple dozen don’t belong there, […] but more important, we will add another 1,000 sites that they’ve never heard of.”

And NewsGuard will rate any site that asks for it, including The Media Leader, which has been added to their queue at no cost.

“But obviously we don’t ask permission to rate somebody because InfoWars is not likely to sign up,” cracked Brill.

‘Nobody knows nothing’

It is difficult to receive a poor rating from NewsGuard, as only exceptionally bad sites trafficking in blatant misinformation are hit with a “red”. For example, FoxNews.com, which often draws criticism from the left and center and which NewsGuard admits publishes “news and partisan opinion that often promotes the Trump administration”, received a “green” label, with a score of 69.5/100.

Yet a staggering 38% of all websites NewsGuard has rated have been given the red label.

Brill and Crovitz argue that advertisers have a unique responsibility to fund high-quality sites, but that the ignorance enabled by programmatic ads has kept them from understanding the wider societal problems they are contributing to.

“The irony is CMOs and CEOs and socially responsible board members of companies, when they see their ads on Russian disinformation sites or healthcare hoax sites, they’re aghast. As they should be,” says Crovitz.

“If we can dramatically reduce the $2.6bn per year being wasted on disinformation sites via programmatic [advertising], that would be great for democracies around the world, and it would save advertisers money.”

They lamented that brands today are still unaware of the size and scale of the issue.

As Brill says: “Everything about this is the wild west, still, even though it’s now an $80bn industry. Basically, it’s like the old Hollywood cliché: nobody knows nothing.”

Though it cannot hope to know everything, NewsGuard is certainly trying to know something.