Standing up to scrutiny: the Radio Planning Optimiser

Opinion

Radiocentre’s planning director responds to a recent review of the radio marketing body’s new Radio Planning Optimiser tool.

At Radiocentre, we welcome thoughtful analysis of the research and effectiveness tools we produce. We firmly believe that open and honest public debate about our work, set within the broader understanding of advertising and media effectiveness, will help lead to more effective planning choices.

In this context, I’d like to thank VCCP Media’s Steve Taylor for his deeply considered and constructive criticism of the Radio Planning Optimiser.

Firstly, Taylor highlights how, against the backdrop of very little accessible benchmarking data for jobbing planners, the Radio Planning Optimiser (“the latest addition to a bunch of great Radiocentre radio planning tools”) is a very welcome addition to the planner’s tool set. He values how the tool is based on a big dataset and enables planners to use “four killer variables” to source the most relevant data to help optimise their campaign weights. Taylor also notes how “the interface is a doddle to use, and the graphs produced are well-presented and downloadable”.

Summarising, Taylor acknowledges the value of the tool to anyone seeking “to support investment in radio, adding radio to a campaign, or looking for data that supports radio as a brand-building medium.” In doing so, he places the Radio Planning Optimiser alongside other respected planning aids: “we need more tools like this across all media to sit alongside those like the IPA’s ARC project.”

Now, it’s not often that a chief strategy officer at a major media agency reviews and publicly praises media owner effectiveness research in this way. So rare, in fact, that — to borrow a turn of phrase from Taylor’s original review — I feel like a real ingrate for feeling compelled to respond to the factual questions flagged within his analysis. But this is an essential element of peer review, so I’d like to thank Taylor for raising these questions and enabling me to add clarity to the current explanations featured within the tool.

Radiogauge stands up to the ‘sniff test’

Firstly, Taylor questions the method used by Radiogauge to establish the effectiveness of radio campaigns, citing the Rosser Reeves Fallacy. I’m in full agreement with Taylor that using Advertising Recognisers as a base for demonstrating advertising effectiveness often produces misleading correlations, but that problem simply doesn’t apply to Radiogauge.

Reading further down the linked Rosser Reeves Fallacy article, the authors assert: “The right way to deal with (all these) spurious correlations is by exposure. Controlled exposure tests take more time, effort and money but they produce more accurate and sensible results.”

This approach of comparing metrics between demographically matched exposed/unexposed samples (i.e. commercial radio listeners vs. non-listeners, in our case) is the method that Radiogauge has used across the last 14 years to isolate the effects of radio advertising campaigns within a wider media mix.

To limit spurious correlations further (i.e. the effect of pre-existing brand favourability on outcomes, ref: Rosser Reeves Fallacy), the exposed and non-exposed data is weighted according to each set of respondents’ history of brand experience. Over the years, this Radiogauge methodology has stood up to the “sniff test” from research teams at a whole range of major advertiser companies and their agencies, all of whom take stress-testing media owner research very seriously!

Why the optimiser focuses on ad awareness

Secondly, Taylor’s review interrogates the Radio Planning Optimiser’s focus on ad awareness as the measure of campaign effectiveness, describing it as “a means but not a useful end goal for advertisers”.

This isn’t unreasonable, and we wouldn’t advocate that advertisers always make ad awareness their primary concern when planning radio campaigns. Rather, we use ad awareness as a sensitive measure of, and proxy for, wider campaign cut-through and brand effect.

In this context, Taylor also flags how ad awareness is an upper-funnel metric, questioning the relevance of the planning tool outputs (i.e. focus on maximising weekly reach over building frequency) for shorter-term sales-led campaigns. This is a fair question, but thankfully we can turn to another big data analysis to help answer it: Radio, the ROI Multiplier is a meta-analysis of short/medium term Revenue ROI data derived from over 2,000 individual uses of different media across 517 separate advertising campaigns.

So what does it tell us? Well, quite simply, the evidence doesn’t really support his assertion: “If your campaign is all about nudging sales today. That takes you back to an in-market audience and a frequency play.”

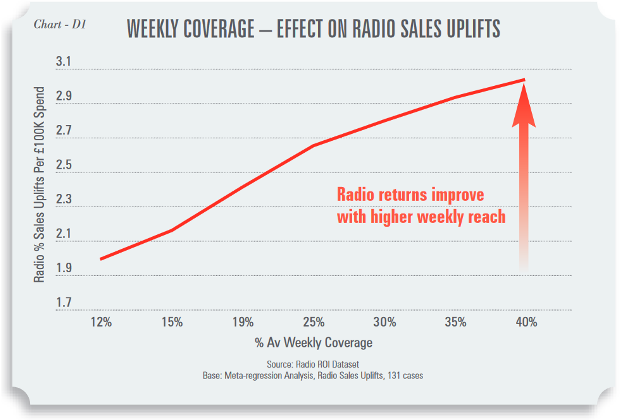

The ROI Multiplier analysis was conclusive: in radio advertising, the impact of weekly reach on sales is far stronger than that of frequency. When weekly reach data was modelled against sales uplifts from radio, the results demonstrated significantly improved returns at higher reach levels (see chart above).

This establishes unequivocally the importance of maximising reach over frequency to optimise the effects of radio advertising throughout the marketing funnel.

Rounding off, I hope that Taylor’s review and this response will encourage The Media Leader to lead further peer reviews of media research and the implications thereof.

It’s indisputable that public debate around research claims, allowing only the strongest to come through unscathed, must ultimately be good news for everyone within the media industry, especially those responsible for facilitating better-informed and more effective media planning decisions.

Ultimately, this is what we all want, isn’t it?

Mark Barber is planning director at Radiocentre, the marketing body for UK commercial radio
