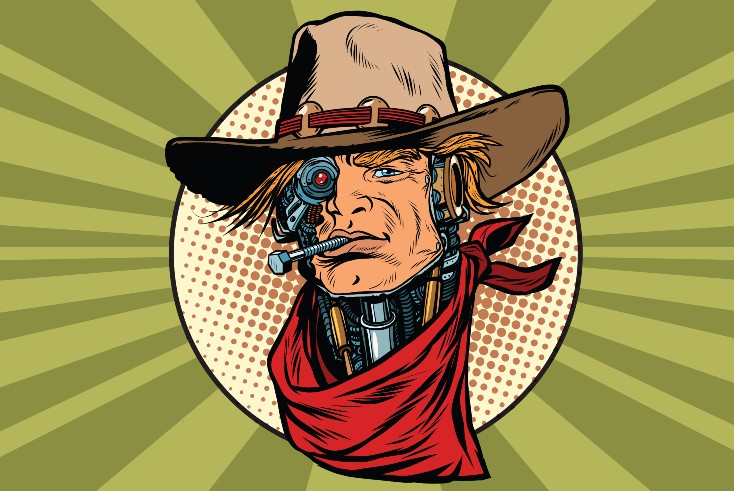

We must avoid an 'AI Wild West'

Opinion

We are only now beginning to regulate the negative manifestations of the internet. That mistake should not be repeated with generative AI.

Artificial intelligence has been with us in one form or another for decades. Generative AI, as they say in the trade, the sort that can come up with an essay or a plausible Times leader in 10 seconds, has burst on us like a tsunami, or more aptly a rapidly multiplying virus, only in the past few weeks.

With existing rapid modes of communication it has already entered the mainstream from a standing start.

The evidence from the past few days is overwhelming. In Saturday’s Sun there was a smart feature on the subject by Dr Mansoor Ahmed-Rengers, founder of the internet tech company OpenOrigins. It was devoted to the pressing question: will AI take my job? The piece revealed that a survey for The Sun found that 68% of Brits had already begun to feel worried about AI and not many fewer foresaw devastating consequences for humanity.

The headline came close to summing up the emerging consensus: “AI is not all bad. Like the internet it will cost jobs as well as create them. We must watch it like a hawk.”

‘The AI Wild West’

In fact, Dr Ahmed-Rengers went further and warned that the tech firms designing these technologies were being driven by market forces, usually admired by The Sun, rather than what’s best or right for the British people, or the world.

“So we live in the AI Wild West,” he said.

Well done to The Sun for getting on to such an important issue so relatively early, although it would be nice if it were to deploy such vigour on some of the other malign viruses afflicting society (such as Brexit).

By Monday, Good Morning Britain was starting to dig its way out of its own Phillip Schofield-Holly Willoughby soap drama with a decent focus on the issue. The programme provided an excellent introduction to what it might all mean, although Susanna Reid was a lot quicker off the mark than Richard Madeley.

Evidence of how serious the threat is to the media, and to the advertising industry in particular, along with the pandemic speed of the spread, comes from NewsGuard, the body that awards credibility ratings to thousands of news websites and television channels.

The organisation has just identified no fewer than 125 news and information websites now generated by AI with little or no human oversight.

The really scary thing is that the number of such sites had more than doubled in the two weeks up to NewsGuard’s research. Exponential growth seems to be guaranteed, and the economic model seems to be to churn out dozens if not hundreds of poorly written articles complete with false claims, celebrity death hoaxes, old news presented as new and fabricated events.

The operators’ hope, according to NewsGuard, is to use generic site names such as iBusiness Day or Ireland Top News to attract programmatic advertising revenue.

Serious advertisers will want to avoid such sites like the plague.

In the wrong hands

These sites are, of course, an international phenomenon, with such “news” sites already identified in ten languages, including English.

The potential for dangerous mayhem is alarmingly obvious. There are medical sites promoting natural health remedies for cancer, or curing skin allergies with lemon juice. And that is before we get to politics and the endless possibility for cheaply generated misinformation.

AI has already provided better early diagnosis of some cancers than most clinicians, but in the hands of bad actors the possibilities are frightening.

As at least a first step forward, NewsGuard is trying to set an industry standard for defining a site as an Unreliable AI-generated News site, or a UAIN site. The criteria would include sites where content is generated without editing or significant human oversight and that do not clearly disclose that they are using AI material.

Clear responsibility to warn

The impact on journalists and journalism is less clear so early in this revolution. At the moment, AI constructs from what already exists and is unable to deal with the new.

It is entirely possible that AI will relieve journalists of some of their more mundane tasks, as has been happening at PA for some years, and release them for more creative or investigative work, perhaps even helped by AI.

The potential problems are, of course, much greater than the sectoral preoccupations of the media industry and journalists. They are not only universal but mark the dawning of a seismic shift every bit as profound as the internet itself.

The warnings have come loud and clear from the people who should be most listened to — those who have created this raging tiger.

Geoffrey Hinton resigned from Google to campaign against the danger of what he in part had created. Sam Altman, chief executive of OpenAI, whose ChatGPT started this particular hare running in public, has warned that regulation is needed to stop the influencing of elections and the manipulation of financial markets.

Steve Wozniak, co-founder of, and the technical brains behind, Apple in the early days, notes the out-of-control race to produce ever more sophisticated digital minds. It cannot be stopped, but it should be regulated and all AI content clearly labelled.

No one knows for sure where all of this is heading, but journalists and the media have a clear responsibility to warn with as much accuracy and insight as they can muster while tracking the emerging implications. Above all, they must back the need for urgent international regulation.

We are only now, ever so belatedly, beginning to regulate the negative manifestations of the internet.

That mistake should not be repeated with generative AI. Otherwise, “Wild West” will only be the mildest of euphemisms.

Raymond Snoddy is a media consultant, national newspaper columnist and former presenter of NewsWatch on BBC News. He writes for The Media Leader on Wednesdays.
