We shouldn’t buy into Musk’s ‘bots’ complaint with Twitter

Opinion

Yes, platforms can do more. Yes, watchdogs and verification companies can do more. But are you honestly doing enough to resist misinformation in online media, asks the editor.

Here’s how old I am (or how young I am, depending on who you are): my first email address was hosted by Compuserve.

Logging on to the World Wide Web (as people actually called it in the mid-90s) involved lots of beeps and honks coming from the bowels of my refrigerator-shaped computer, and ran the risk of my dad noticing that I was clogging up the phone line again.

Obviously much has changed since then: Altavista and Jeeves were long ago replaced by Google, and chat rooms still exist but are far less popular than social media “platforms” such as Facebook, Instagram and Twitter.

But two questions have never been convincingly answered and, we now have to assume, will never be answered.

- How can you trust that what you find online is genuine?

- How can you trust the people you meet online are real? (in both senses of the word: are they humans, not robots, and are they genuine, not fraudsters?)

The issue of trust has come up again after Elon Musk decided to pause his $44bn takeover of Twitter because he apparently doubts the claim that only 5% of its users are fake or ‘bots’.

It’s a special kind of genius who can become the world’s richest man, build a string of successful and pioneering companies, but then decide to pay over the odds for a company he now worries has more bots than he was led to believe. Musk’s Twitter account alone is followed by an estimated 40 million fake accounts – more than half of his followers.

This is not just a Twitter-specific problem: Meta (the artist formerly known as Facebook) regularly tells the world that the number of fake accounts it takes down every year dwarfs the 3 billion ‘real’ users it has across Facebook, Instagram and WhatsApp.

And it’s the same thing with the Open Web. For all the talk of sanctions against Russia for invading Ukraine, I haven’t heard of any investigations being launched into fraudulent online advertising from the US or Europe, even though ad fraud is well known to commonly originate with, and divert money to, state-backed Russian criminal gangs. Dentsu, by the way, was the only major global agency network that would respond to questions related to my column about this from two months ago.

As Damon Reeve, the CEO of publisher media-buying platform The Ozone Project, explained recently, the way the open Web has been built creates actual disincentives against treating ‘trusted’ publishers differently from obscure or potentially fraudulent ones when it comes to online advertising.

Speaking at last month’s Future of Brands event in London, Reeve said: “[Supply-side platforms] have a real interest in normalising all publishers…. the SSPs reduce premium publishers down to a Nepalese calendar website and raise lower websites, which incentivises fraud and malware.”

Isn’t this what certification bodies are for? You would think.

Over the weekend, it came to light that the Trustworthy Accountability Group, a 700+ member community that includes Google, Facebook, Amazon, The New York Times, Disney, Omnicom and others, lists a company like VDO.AI on its register as “TAG verified”.

India-based VDO.AI is a fake company, as pointed out several weeks ago by Nandini Jammi, co-founder of the Check My Ads institute. You can look up their employees on LinkedIn, but you won’t find much other than a name, job title and a headshot picture that is apparently generated by AI.

As TAG’s CEO and self-proclaimed “crimefighter” Mike Zaneis said on Twitter when challenged by adtech researcher EJ Gibney about this, VDO.AI is not TAG Certified, but is simply “verified” as being a real company.

Being Verified by TAG (VbT) is very different than holding a TAG Certification. The link in your screenshot provides a clear explanation of what it means … simply that they are a real corporation and have completed TAG training.

— Mike Zaneis (@mikezaneis) May 13, 2022

The 700+ member TAG community includes the world’s largest and most influential brands, agencies, publishers, and ad tech providers. It should be held to a higher standard than simply “certifying” companies for existing. While some companies exist to provide value, others simply exist to exploit misinformation.

We also need to hold ourselves to higher standards, not just as media buyers, strategists, or publishers, but as media consumers, too. Are we really so susceptible to bullshit? If not, why are so many of us so quick to demand that publishers “moderate” content when we should be able to do this ourselves?

It seems too often that we hear about conspiracy theories and “fake news” becoming prevalent on social media and online forums, as if those things did not exist in the age of rotary-dial telephones and newspapers that required an eagle’s wingspan to hold with two hands.

The price we pay for anything resembling a free society is having to sidestep a small amount of crap in our media every once in a while, in the same way that we have to dodge the occasional doggy doodoo in the street.

I don’t want everyone on social media to have to prove their identity to satisfy Elon Musk or any other tech billionaire who has no good reason to have a copy of my driver’s licence, any more than I would want every square inch of public road to be surveilled by government cameras.

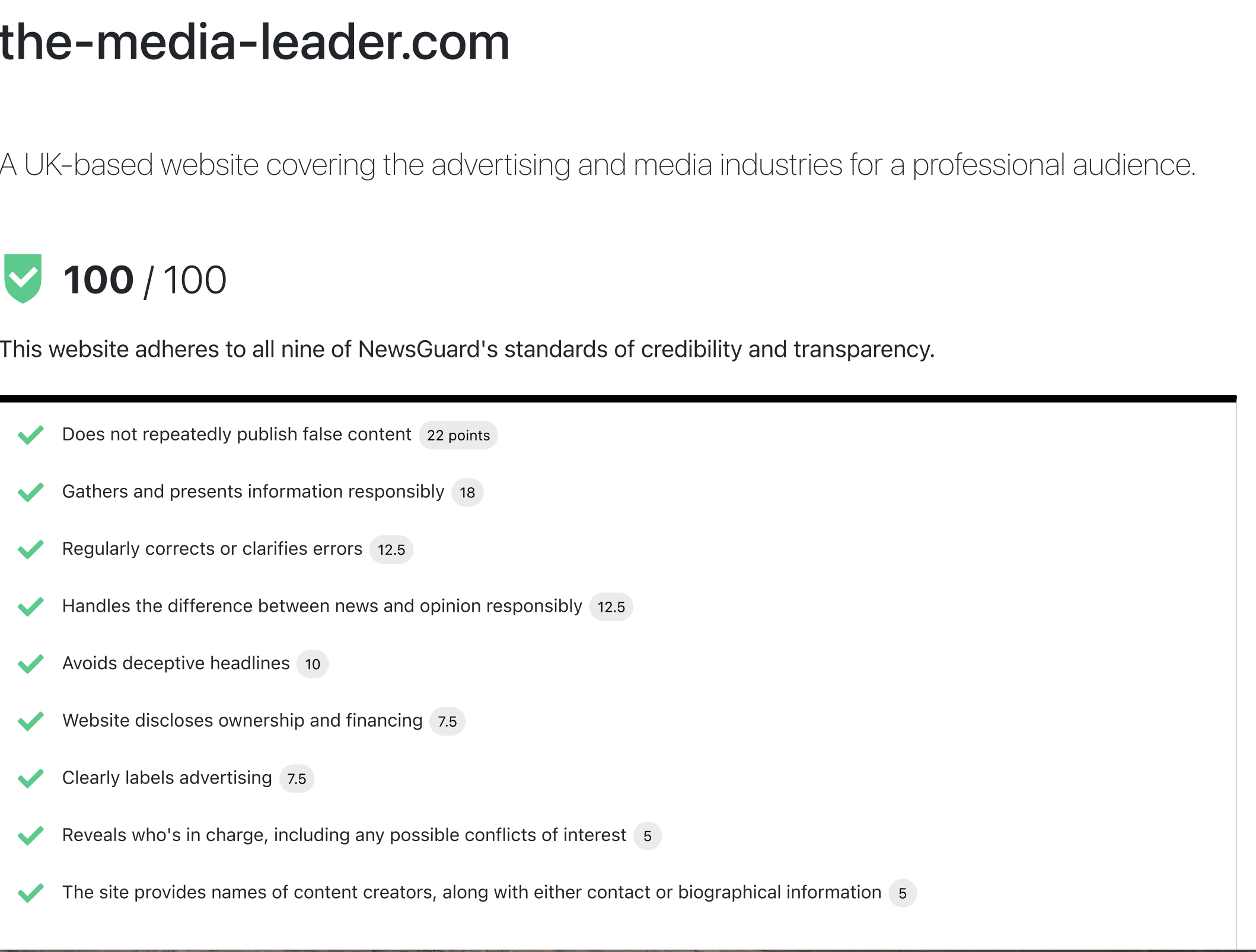

For our part, The Media Leader has just been scored full marks by NewsGuard, the journalism and tech tool that tracks online misinformation and rates news organisations for their credibility and authority. I invited NewsGuard to do this after our reporter Jack Benjamin’s superb recent interview with its founders.

Journalism can be a very tough business, especially when you’re trying to publish information that people and companies would rather you didn’t publicise and actively try to obstruct. We can’t always get it right; when we get it seriously wrong we’ll hold up our hands and correct the error publicly.

We’ve also recently created an About Us page which confirms our corrections policy, and I’m directly contactable if you have any complaints about our coverage. I also like compliments. A lot.